DHT Routing Examples

These examples walk through how a lookup actually works in CAN, Chord, and Dynamo. In all three cases, the starting point is the same: you have a key, you hash it, and you need to find the node responsible for storing the corresponding value. The hash function does the work of mapping the key to a position in the identifier space. From there, routing is purely mechanical.

A Note on Hash Values

A real cryptographic hash, such as SHA-256, produces a 256-bit value (64 hexadecimal digits). For example:

SHA-256("user:alice") = dabd1db8d35ab13106274f61f1bf977812cce4f477b15014cf38fb796c50a4c4

That number is too large to reason about intuitively, so the examples below use truncated 8-digit hex values to represent hashes while keeping the same idea. The mechanics are identical regardless of how many bits the hash produces.
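Python's standard `hashlib` module computes these digests directly. The sketch below takes the full 256-bit SHA-256 digest of a string and then keeps only its first 8 hex digits, mirroring the truncation convention used in this document. The helper names `sha256_hex` and `truncated_hash` are our own and are not part of any DHT.

```python
import hashlib

def sha256_hex(text: str) -> str:
    """Full SHA-256 digest of text: 64 hex digits (256 bits)."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def truncated_hash(text: str, hex_digits: int = 8) -> int:
    """Keep only the leading hex digits, as the examples here do for readability."""
    return int(sha256_hex(text)[:hex_digits], 16)

full = sha256_hex("user:alice")
short = truncated_hash("user:alice")
print(full)            # 64 hex digits
print(f"{short:08x}")  # the first 8 of those digits
```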

CAN Example: Routing in a 2D Coordinate Space

Setup

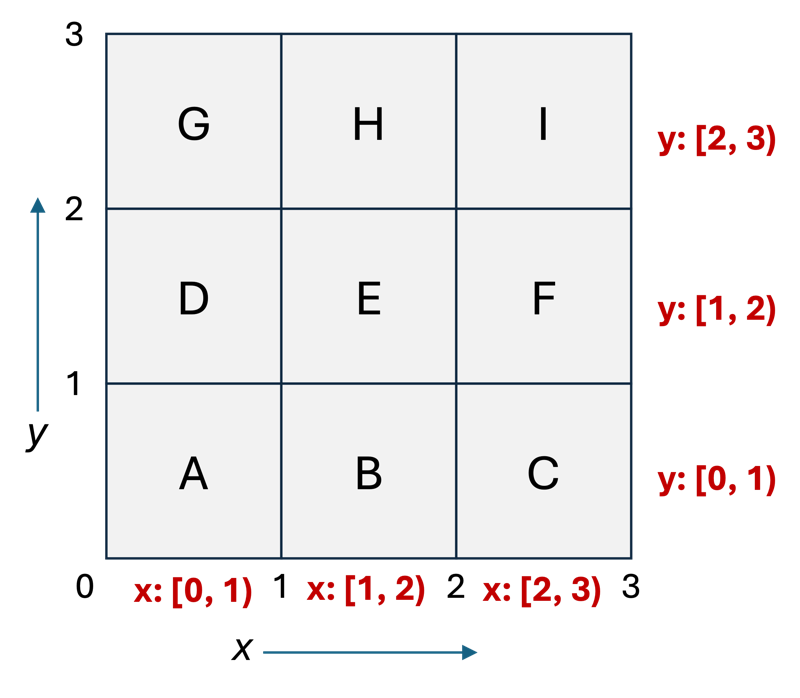

In our example, the 2D coordinate space covers the range [0, 3) on each axis. The value 3 was chosen simply because it gives a clean 3x3 grid of nine nodes: small enough to draw and reason about, yet large enough to show multi-hop routing.

In a real CAN deployment, the coordinate space would be divided among far more nodes, and the zones would be rectangles of varying sizes rather than a uniform grid. The space itself is continuous: it is not pre-divided into any fixed number of zones. The zone boundaries emerge from how nodes join the system, not from the space itself. In this example, we start with nine nodes already present, and they happen to have carved out a tidy 3x3 arrangement. A different joining history would produce irregular zone shapes.

The zone boundaries for each node are:

| Node | x range | y range |
|---|---|---|
| A | [0, 1) | [0, 1) |
| B | [1, 2) | [0, 1) |
| C | [2, 3) | [0, 1) |
| D | [0, 1) | [1, 2) |
| E | [1, 2) | [1, 2) |
| F | [2, 3) | [1, 2) |
| G | [0, 1) | [2, 3) |
| H | [1, 2) | [2, 3) |
| I | [2, 3) | [2, 3) |

Each node knows only its immediate neighbors: the nodes sharing a border with its zone. If the nodes don’t form an even grid, a node may have multiple neighbors on one or more sides. In that case, it still only needs to know one neighbor per side (it doesn’t matter which neighbor).

Hashing the Key

To store the key-value pair ("user:alice", ...), we need a coordinate in the 2D space, which means one hash value per dimension. The CAN paper does not specify how to derive these, but one way is to append a dimension label to the key before hashing:

hash_x("user:alice") = SHA-256("user:alice" + "x") = 0xC4A271F8...

hash_y("user:alice") = SHA-256("user:alice" + "y") = 0x5E712C04...

These are large integers. To map them into our 3x3 coordinate space, we normalize each truncated value to the range [0, 3): divide by 2^32 (one more than the largest 8-digit hex value, 0xFFFFFFFF, so the quotient always stays below 1) and multiply by 3:

x = (0xC4A271F8 / 2^32) * 3 ≈ 0.768 * 3 ≈ 2.3

y = (0x5E712C04 / 2^32) * 3 ≈ 0.369 * 3 ≈ 1.1

The target point is (2.3, 1.1). Looking at the zone table, x=2.3 falls in [2,3) and y=1.1 falls in [1,2), which is Node F’s zone. That is the node responsible for storing this key.

The Routing

The client sends its lookup to Node A, a randomly chosen node.

At Node A (x=[0,1), y=[0,1)): The target is (2.3, 1.1). Node A checks whether the target falls in its zone. It does not. Node A compares:

- x=2.3 is greater than x_max=1, so the target is to the east.
- Forward to the eastern neighbor: Node B.

At Node B (x=[1,2), y=[0,1)):

- x=2.3 is greater than x_max=2, so the target is still to the east.
- Forward to the eastern neighbor: Node C.

At Node C (x=[2,3), y=[0,1)):

- x=2.3 is within [2,3). The x coordinate matches.
- y=1.1 is greater than y_max=1, so the target is to the north.
- Forward to the northern neighbor: Node F.

At Node F (x=[2,3), y=[1,2)):

- Is x=2.3 within [2,3)? Yes.
- Is y=1.1 within [1,2)? Yes.
- The target point is inside this zone. Node F is responsible for the key.

The path was: A → B → C → F. Two intermediate nodes, then the answer.
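The walk above can be reproduced in a few lines of Python. This is a minimal sketch under our own assumptions: the names `ZONES`, `normalize`, `neighbor_toward`, and `route` are hypothetical; the space does not wrap around (the original CAN design uses a torus, but this example never needs the wrap); and neighbors are found by scanning the global zone table for brevity, whereas a real CAN node keeps only its own neighbor list. The CAN paper describes forwarding to whichever neighbor is closest to the destination; the sketch uses the simpler one-axis-at-a-time rule from this walkthrough.

```python
# Zones from the example table: name -> ((x_lo, x_hi), (y_lo, y_hi)), half-open.
ZONES = {
    "A": ((0, 1), (0, 1)), "B": ((1, 2), (0, 1)), "C": ((2, 3), (0, 1)),
    "D": ((0, 1), (1, 2)), "E": ((1, 2), (1, 2)), "F": ((2, 3), (1, 2)),
    "G": ((0, 1), (2, 3)), "H": ((1, 2), (2, 3)), "I": ((2, 3), (2, 3)),
}

def normalize(truncated: int, side: float = 3.0, bits: int = 32) -> float:
    """Map a truncated hash into [0, side) by dividing by 2**bits."""
    return truncated / 2 ** bits * side

def contains(zone, point) -> bool:
    (x_lo, x_hi), (y_lo, y_hi) = zone
    x, y = point
    return x_lo <= x < x_hi and y_lo <= y < y_hi

def neighbor_toward(name: str, direction: str) -> str:
    """Return a zone sharing a border with `name` on the given side.

    Scans the global table for brevity; a real node consults only its neighbors.
    """
    (x_lo, x_hi), (y_lo, y_hi) = ZONES[name]
    for other, ((ox_lo, ox_hi), (oy_lo, oy_hi)) in ZONES.items():
        x_overlap = ox_lo < x_hi and x_lo < ox_hi
        y_overlap = oy_lo < y_hi and y_lo < oy_hi
        matches = {
            "east": ox_lo == x_hi and y_overlap,
            "west": ox_hi == x_lo and y_overlap,
            "north": oy_lo == y_hi and x_overlap,
            "south": oy_hi == y_lo and x_overlap,
        }
        if matches[direction]:
            return other
    raise ValueError(f"{name} has no neighbor to the {direction}")

def route(start: str, point) -> list:
    """Greedy routing: correct x first, then y, one neighbor at a time."""
    path, current = [start], start
    while not contains(ZONES[current], point):
        (x_lo, x_hi), (y_lo, y_hi) = ZONES[current]
        x, y = point
        if x >= x_hi:
            direction = "east"
        elif x < x_lo:
            direction = "west"
        elif y >= y_hi:
            direction = "north"
        else:
            direction = "south"
        current = neighbor_toward(current, direction)
        path.append(current)
    return path

point = (normalize(0xC4A271F8), normalize(0x5E712C04))  # roughly (2.30, 1.11)
print(route("A", point))  # ['A', 'B', 'C', 'F']
```

Each node's decision inside `route` uses only its own bounds and the target point; the global table appears only in the neighbor lookup shortcut.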

What to Notice

The routing rule at each node is just a series of comparisons: is the target to my east, west, north, or south? No node needs to know anything beyond its own zone boundaries and its immediate neighbors. The query always moves closer to the target, so it always terminates.

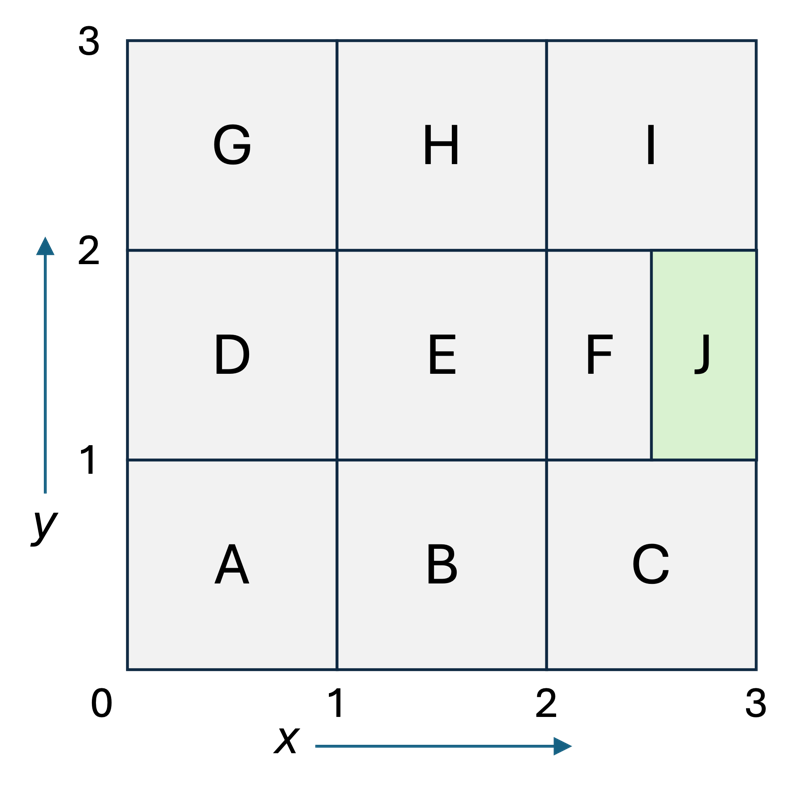

Adding a New Node

Suppose a new node J joins the system. J hashes an identifier (such as its hostname or IP address) to obtain a position in the coordinate space, say, the point (2.5, 1.5), which falls within Node F’s zone. J contacts any existing node, which routes the join request to F using the same greedy algorithm as a lookup.

F and J split F’s zone in half. One natural split along the x-axis gives:

- F keeps x=[2, 2.5), y=[1, 2)
- J takes x=[2.5, 3), y=[1, 2)

F transfers to J all key-value pairs whose coordinates fall in J’s new zone. No other node changes its zone or its stored keys. Neighbor lists change only locally: F and J record each other as neighbors, and the nodes whose zones border both halves of F’s old zone (here C below and I above) add J to their lists. The remaining nodes see no change at all.

This is the point: the coordinate space is continuous and pre-existing. Adding a node does not re-hash anything. It just redraws one boundary line and moves the keys on one side of that line to the new node.
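The split and the key handoff can be sketched as follows, assuming the x-axis split described above. The helpers `split_zone`, `contains`, and `transfer_keys` are hypothetical names, and the two stored keys `k1` and `k2` (with their coordinates) are made up purely for illustration.

```python
def split_zone(zone, axis: int):
    """Halve a zone along axis 0 (x) or 1 (y); return (kept_half, new_half)."""
    lo, hi = zone[axis]
    mid = (lo + hi) / 2
    kept, new = list(zone), list(zone)
    kept[axis], new[axis] = (lo, mid), (mid, hi)
    return tuple(kept), tuple(new)

def contains(zone, point) -> bool:
    """True if point lies in the half-open box described by zone."""
    return all(lo <= c < hi for (lo, hi), c in zip(zone, point))

def transfer_keys(store: dict, new_zone) -> dict:
    """Remove and return every entry whose point falls inside new_zone."""
    moved = {k: v for k, v in store.items() if contains(new_zone, v[0])}
    for k in moved:
        del store[k]
    return moved

# F's zone before J arrives, plus two hypothetical keys: key -> (point, value).
f_zone = ((2, 3), (1, 2))
f_store = {"k1": ((2.3, 1.4), "v1"), "k2": ((2.7, 1.6), "v2")}

f_zone, j_zone = split_zone(f_zone, axis=0)
j_store = transfer_keys(f_store, j_zone)
print(f_zone, j_zone)    # ((2, 2.5), (1, 2)) ((2.5, 3), (1, 2))
print(f_store, j_store)  # k1 stays with F, k2 moves to J
```

Nothing is re-hashed: each key keeps the point it already had, and only the boundary test decides which node holds it.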

Chord Example: Routing on a Ring

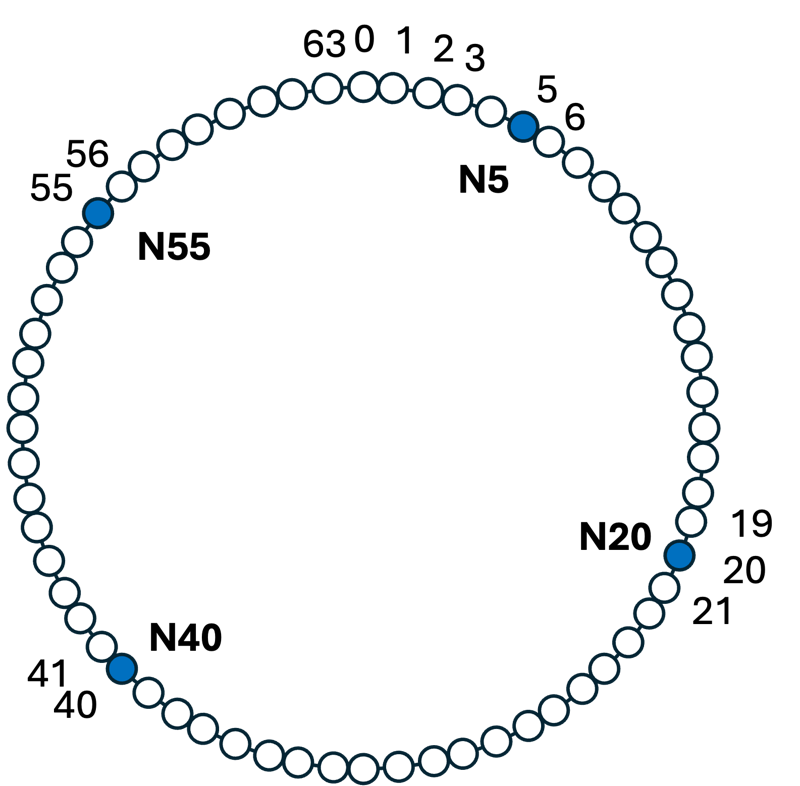

Setup

We use a ring with positions 0 to 63 (a 6-bit identifier space). Again, we are keeping the numbers small. The choice of 64 positions means:

- The ring can hold at most 64 nodes, since each node needs a unique position. In practice, you want the identifier space to be far larger than the number of nodes you will ever deploy, which is why real systems use 160-bit (SHA-1) or 256-bit (SHA-256) identifiers.
- A node’s position on the ring is hash(IP address) mod 64.
- The target position for a key lookup is hash(key) mod 64.
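A minimal sketch of both placements, assuming SHA-256 as the hash. The helper `ring_position` and the IP address `10.0.0.7` are hypothetical, and the positions this prints are whatever SHA-256 yields; they need not match the illustrative positions used in this walkthrough.

```python
import hashlib

RING_BITS = 6
RING_SIZE = 2 ** RING_BITS  # 64 positions in the toy ring

def ring_position(identifier: str) -> int:
    """hash(identifier) mod 64, using the full 256-bit SHA-256 value."""
    digest = int(hashlib.sha256(identifier.encode("utf-8")).hexdigest(), 16)
    return digest % RING_SIZE  # depends only on the lowest 6 bits

node_pos = ring_position("10.0.0.7")        # hypothetical node IP address
key_pos = ring_position("products/laptop")  # a key, placed the same way
print(node_pos, key_pos)
```

Nodes and keys share one identifier space, which is what lets a key's position be compared directly with node positions.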

There are four nodes on the ring:

| Node | Position | Owns keys |
|---|---|---|
| N5 | 5 | 56 to 5 |
| N20 | 20 | 6 to 20 |
| N40 | 40 | 21 to 40 |
| N55 | 55 | 41 to 55 |

A node owns all keys from just after its predecessor’s position up to and including its own position. The ring wraps around: N5 owns keys 56 through 5 (crossing the 0/63 boundary).

Each node knows its successor: the next node clockwise. N5’s successor is N20; N20’s successor is N40; N40’s successor is N55; N55’s successor is N5 (wrapping around).

Finger Tables

With successor pointers alone, a lookup might walk around the entire ring one step at a time (O(N) for N nodes). Chord adds a finger table so each node can jump ahead.

The finger table is a short list of node references. Entry i in the table answers the question: “if I need to reach a key that is 2^i positions ahead of me on the ring, which node should I forward to?”

The table has exactly k entries, where k is the number of bits in the identifier. For SHA-1 that is 160 entries; for SHA-256 it is 256 entries. The size depends only on the identifier length, not on how many nodes are in the ring, and it is tiny compared with the 2^k positions in the identifier space. In our 6-bit example, each node has exactly 6 entries.

Entry i points to the node responsible for position (p + 2^i) mod 2^k, where p is the node’s own position. The table stores the actual node identity and address, not raw ring positions, which would be impractical to work with in a ring with 2^160 or 2^256 possible positions. The position arithmetic is used only to determine which node to point to when the table is built or refreshed. During a lookup, a node simply scans its list of node references and picks the one closest to the target without overshooting.

In a sparsely populated ring like our four-node example, several consecutive entries may point to the same node – entries 0 through 3 in N5’s table all resolve to N20 because all four offsets land inside N20’s range. This redundancy is expected and harmless. In a densely populated ring, consecutive entries would more often point to different nodes, and the finger table would provide finer-grained jumps.

Node N5’s finger table:

| Entry i | Offset 2^i | Target position (5 + 2^i) mod 64 | Responsible node |
|---|---|---|---|
| 0 | 1 | 6 | N20 |
| 1 | 2 | 7 | N20 |
| 2 | 4 | 9 | N20 |
| 3 | 8 | 13 | N20 |
| 4 | 16 | 21 | N40 |
| 5 | 32 | 37 | N40 |

Node N40’s finger table:

| Entry i | Offset 2^i | Target position (40 + 2^i) mod 64 | Responsible node |
|---|---|---|---|
| 0 | 1 | 41 | N55 |
| 1 | 2 | 42 | N55 |
| 2 | 4 | 44 | N55 |
| 3 | 8 | 48 | N55 |
| 4 | 16 | 56 | N5 |
| 5 | 32 | 8 | N20 |
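Both tables follow mechanically from the rule "entry i points to the node responsible for (p + 2^i) mod 64". The sketch below recomputes them from the four node positions. The names `successor` and `finger_table` are hypothetical, and the global `NODES` list is a shortcut for illustration; a real Chord node fills its fingers by issuing lookups and refreshes them during stabilization.

```python
M = 6                    # identifier bits in the toy ring
RING = 2 ** M            # 64 positions
NODES = [5, 20, 40, 55]  # node positions, sorted

def successor(position: int) -> int:
    """The node responsible for a position: first node at or clockwise after it."""
    position %= RING
    for node in NODES:
        if node >= position:
            return node
    return NODES[0]      # wrapped past 63, so the smallest node owns it

def finger_table(p: int) -> list:
    """Rows of (i, 2**i, target position, responsible node) for the node at p."""
    return [(i, 2 ** i, (p + 2 ** i) % RING, successor(p + 2 ** i)) for i in range(M)]

for row in finger_table(5):
    print(row)
```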

Hashing the Key

To look up the key "products/laptop":

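SHA-256("products/laptop") = 0x2FC8B1A3... (truncated for illustration)

mod 64 = 47

Key hash = 47. Reducing mod 64 depends only on the lowest 6 bits of the full 256-bit value, which the truncated prefix above does not show. Looking at the ownership table, 47 falls in the range 41-55, which belongs to N55.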

The Routing

The query starts at Node N5.

At N5 (owns 56-5): Key 47 is not in N5’s range. N5 scans its finger table for the node that is closest to 47 while lying strictly between its own position (5) and 47. Its entries point to N20 (position 20) and N40 (position 40). N40 is the closest without overshooting 47. Forward to N40.

At N40 (owns 21-40): Key 47 is not in N40’s range (21-40). N40 checks: is 47 between my position (40) and my successor’s position (55)? Yes. So N40 forwards directly to its successor, N55.

At N55 (owns 41-55): 47 is in the range 41-55. N55 is responsible for this key. Lookup complete.

The path was: N5 → N40 → N55. Two hops.
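The same three-step decision (do I own it? is it just past my successor? otherwise take the farthest finger that does not overshoot) can be sketched in Python. The helper names are hypothetical, each node is assumed to know its predecessor (as Chord nodes do), and fingers are computed from a global node list for brevity.

```python
M, RING = 6, 64
NODES = [5, 20, 40, 55]  # sorted ring positions

def successor(position: int) -> int:
    """First node at or clockwise after position (used only to build fingers)."""
    position %= RING
    return next((n for n in NODES if n >= position), NODES[0])

def predecessor(node: int) -> int:
    """Previous node on the ring; index -1 wraps to the last node."""
    return NODES[NODES.index(node) - 1]

def fingers(p: int) -> list:
    return [successor(p + 2 ** i) for i in range(M)]

def in_half_open(x: int, a: int, b: int) -> bool:
    """x in the ring interval (a, b], which may wrap past 63."""
    return a < x <= b if a < b else (x > a or x <= b)

def in_open(x: int, a: int, b: int) -> bool:
    """x in the ring interval (a, b), which may wrap past 63."""
    return a < x < b if a < b else (x > a or x < b)

def lookup(start: int, key: int) -> list:
    """Return the nodes a query visits, ending at the node responsible for key."""
    path, node = [start], start
    while True:
        if in_half_open(key, predecessor(node), node):
            return path                  # this node owns the key
        succ = fingers(node)[0]          # entry 0 is the immediate successor
        if in_half_open(key, node, succ):
            path.append(succ)            # key lies just ahead: hand off to successor
            return path
        # Otherwise jump to the farthest finger that does not overshoot the key.
        node = next(f for f in reversed(fingers(node)) if in_open(f, node, key))
        path.append(node)

print(lookup(5, 47))  # [5, 40, 55]
```

The wrap-aware interval checks matter for keys such as 3, whose owner N5 sits just past the 63/0 boundary.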

What to Notice

The finger table lets N5 skip over N20 and jump directly to N40, which is closer to the target. With only four nodes, the savings are modest, but in a ring with a million nodes, a full successor walk would take up to a million hops. Finger tables reduce that to about 20 hops (log2 1,000,000 ≈ 20).

Also notice that no node needs to know the full ring. Each node knows only its own range, its successor, and its finger table entries. The query gets routed to the right place purely by each node making a local decision.

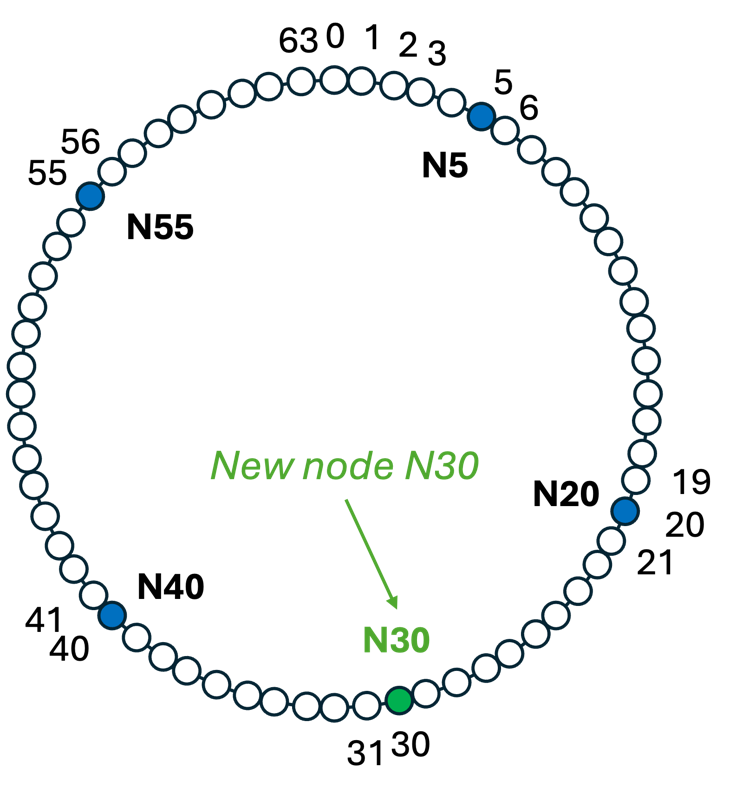

Adding a New Node

Suppose a new node N30 joins the ring. Its identifier hashes to position 30, which falls in N40’s range (21 to 40).

N30 contacts any existing node, which routes the join to N40. N40 hands over responsibility for keys 21 to 30 to N30, and keeps keys 31 to 40 for itself. The new ownership table is:

| Node | Position | Owns keys |
|---|---|---|
| N5 | 5 | 56 to 5 |
| N20 | 20 | 6 to 20 |
| N30 | 30 | 21 to 30 |
| N40 | 40 | 31 to 40 |
| N55 | 55 | 41 to 55 |

N20’s successor pointer updates from N40 to N30. N30’s successor is N40. Every other successor pointer in the ring is unchanged. Finger tables are updated in the background by the stabilization protocol.

N5, N20, and N55 do not move any keys. The keys that move are only those in positions 21-30, and they move from N40 to N30. Nothing else changes.
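The claim that only keys 21-30 move, and only N20's successor pointer changes, can be checked by comparing ownership before and after the join. This sketch takes a global view of the ring for illustration only (the helper names are hypothetical); in Chord itself the join and the pointer updates happen through lookups and stabilization.

```python
def successor(position: int, nodes: list) -> int:
    """Node responsible for a position: first node at or clockwise after it."""
    ring = sorted(nodes)
    return next((n for n in ring if n >= position), ring[0])

def successor_pointers(nodes: list) -> dict:
    """Each node's successor pointer: the next node clockwise."""
    ring = sorted(nodes)
    return {n: ring[(i + 1) % len(ring)] for i, n in enumerate(ring)}

before = [5, 20, 40, 55]
after = before + [30]  # N30 joins

moved = {k: (successor(k, before), successor(k, after))
         for k in range(64) if successor(k, before) != successor(k, after)}
old_ptrs, new_ptrs = successor_pointers(before), successor_pointers(after)
changed = [n for n in before if old_ptrs[n] != new_ptrs[n]]

print(min(moved), max(moved), set(moved.values()))  # 21 30 {(40, 30)}
print(changed)                                       # [20]
```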

Dynamo: No Finger Tables, No Hops

Chord’s finger tables are an elegant solution to a specific problem: in a peer-to-peer network with millions of nodes and no central authority, no node can afford to know the full membership of the ring. Finger tables let each node store only O(log n) references while still resolving lookups in O(log n) hops with high probability.

Amazon Dynamo operates under different constraints. It runs in a controlled environment, potentially spanning multiple data centers for fault tolerance and supporting thousands of virtual nodes, but its membership is managed administratively rather than open to arbitrary participants. Nodes join and leave under Amazon’s control, not at the whim of anonymous peers. Membership changes are infrequent and propagate reliably through gossip (periodic exchanges of membership information between randomly chosen nodes). In that environment, every node can afford to maintain a complete map of the ring.

Rather than using finger tables, each Dynamo node stores the full membership: the hash range that every other node is responsible for.

When a request arrives, the receiving node computes the target position from the key’s hash, looks up which node owns that range in its local table, and forwards the request directly. This is a single network hop regardless of ring size: an O(1) lookup.

Using our four-node ring as an example, every node would hold a table like this:

| Hash range | Responsible node |
|---|---|
| 56 to 5 | N5 |
| 6 to 20 | N20 |
| 21 to 40 | N40 |
| 41 to 55 | N55 |

A request arriving at N5 for key hash 47 does not need to route through any intermediate node. N5 checks its local table, sees that 47 falls in the range 41-55, and sends the request directly to N55 in one hop.
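Because every node holds the sorted list of positions, finding the owner is a local binary search. A minimal sketch over the toy four-node ring, using Python's standard `bisect` module; `MEMBERSHIP` and `responsible_node` are hypothetical names, and the sketch ignores virtual nodes and replication, both of which real Dynamo uses.

```python
import bisect

RING_SIZE = 64
# Each node's local copy of the full membership: sorted positions of every node.
MEMBERSHIP = [5, 20, 40, 55]

def responsible_node(key_hash: int, membership: list = MEMBERSHIP) -> int:
    """Zero-hop lookup: a local binary search, with no routing through other nodes."""
    i = bisect.bisect_left(membership, key_hash % RING_SIZE)  # first position >= key
    return membership[i] if i < len(membership) else membership[0]  # wrap past 63

print(responsible_node(47))  # 55
```

The search costs O(log n) local comparisons but no network hops; the price is keeping `MEMBERSHIP` current on every node.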

This is what the Dynamo paper means when it describes Dynamo as a zero-hop DHT. The trade-off is straightforward: each node uses more memory (a full membership table rather than a finger table with log n entries), and the gossip protocol must propagate membership changes to every node. In exchange, multi-hop routing disappears: no intermediate nodes sit between the node that receives a request and the node that serves it. For a latency-sensitive service like a shopping cart, that is a trade worth making.

CAN and Chord both route a query from an arbitrary starting node to the node responsible for a key, using only local information at each hop. The difference between them is the geometry.

In CAN, the identifier space is multi-dimensional, and routing moves toward the target by correcting one coordinate at a time. In Chord, the identifier space is a one-dimensional ring, and routing jumps ahead using exponentially spaced finger table entries.

In Dynamo, every node holds the complete membership table, so any node can forward a request directly to the responsible node in a single hop. CAN and Chord are designed for open, decentralized networks where no node can know the full membership. Dynamo trades that generality for O(1) routing by requiring every node to maintain full ring knowledge, which is a practical choice when membership is managed administratively and propagated via gossip in a controlled environment.